A.I. as a Really Smart Horse

How A.I. Creates a Third Space between Material & Labor

In this post:

A.I. as a Really Smart Horse

Conscious Buildings

A.I.s with Emotional Problems?

I saw a podcast the other day where some guy was expounding on the unpredictable effects GPT was going to have because it could not be properly classified as 'material' or 'labor.' The last 300 years of economic thinking is predicated on the idea that there are basically two main inputs to any economic system: materials, and labor. But what is Natural Language, Generative AI (NLGAI), really? It's not exactly a material input, even when you make allowances for all the non-physical material inputs economists have come up with. But it's not exactly labor, either. Not just because it's non-human, but also because it's infinite, essentially. Labor has value because we only have so many hours in a day, and we're willing to trade our time for capital. But Large Language Models (LLMs) don't really have that problem.

It requires hardware and energy, so it could be considered a kind of second-order material input. That doesn’t feel quite right, either, because our material inputs don’t typically talk back to us.

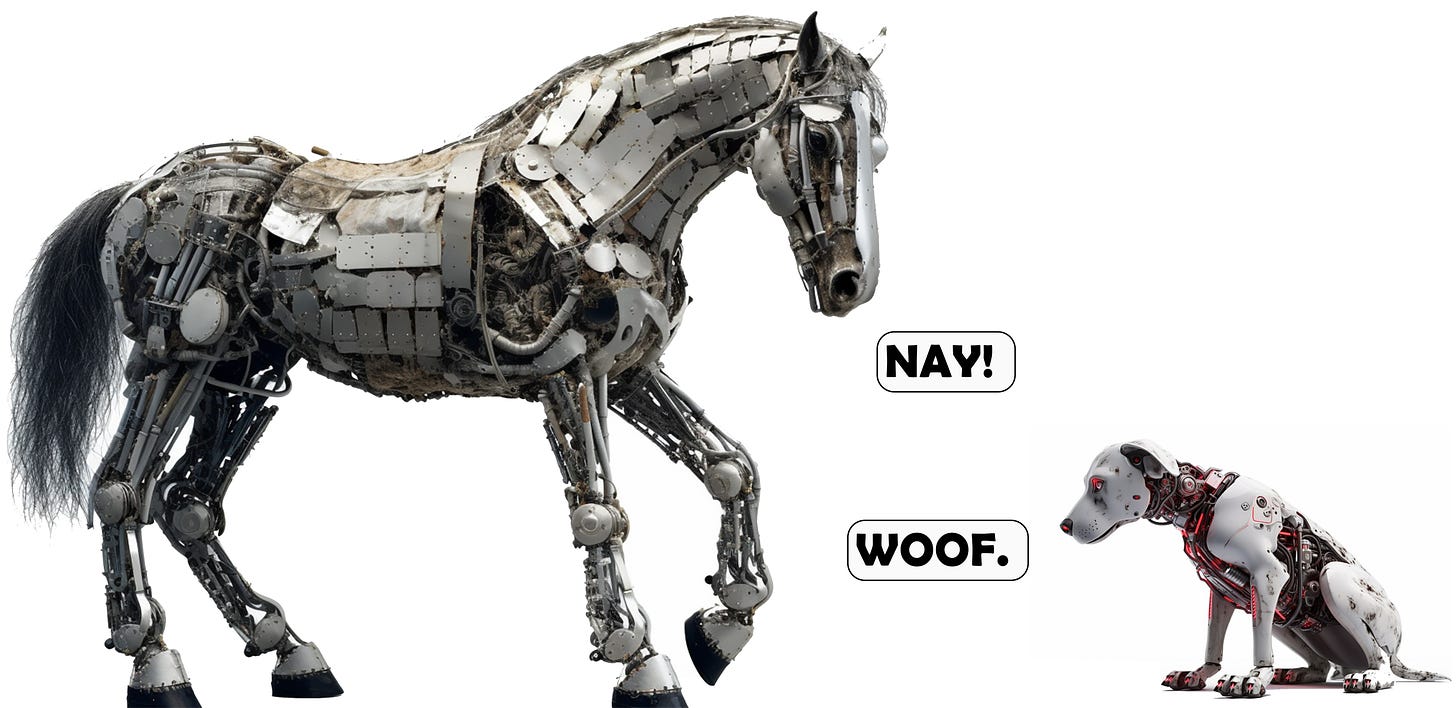

A.I. as a Really Smart Horse

I was thinking a lot about that this past week and concluded that it’s more like a really smart draft horse. In a strictly economic sense, horses could be considered either material or labor, and sometimes both. In most tax scenarios, a draft horse would be considered ‘livestock’ (tangible personal property) but treated as a capital asset because of its role in production. If you had a horse that did nothing, it would probably just be treated as a pet.

But anyone who knows farming (I come from a farming family) knows that animals are kinda both. They're non-human workers. And you measure their cost and their output differently than you would a human laborer, or, say, grain. They're something in the middle. The IRS might say different, but that’s a horse of a different color.

Horses, and all domesticated animals, to varying degrees, have filled this middle space throughout history. Dogs were used for hunting or security. Cats for catching vermin. Elephants for logging, or war. We domesticated animals not for their company, but so that they could do something for us. Except for cats - cats seem to have domesticated themselves. The company turned out to be a nice side benefit, and thus created a middle ground between material and labor. They worked for us but also with us, and forged an evolutionary and emotional bond with the humans in their orbit.

A.I. seems to be headed in the same direction - an entity that works for us and with us to do the things that we can’t. Not fully human, but not purely material, either.

Conscious Buildings

I’ve been having some great conversations with Keith Besserud about ‘Conscious Buildings’ lately. We already have ‘Smart Buildings’ that can do the sort of automatic, programmable ‘if this, then that’ tasks that computers already do so well. I mean, that’s basically what a thermostat is.
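To make the ‘if this, then that’ point concrete, here is a minimal sketch of the kind of rule a thermostat runs. The function name, the Fahrenheit units, and the one-degree deadband are my own assumptions for illustration, not how any particular product works.

```python
def thermostat_action(current_temp_f, setpoint_f, deadband_f=1.0):
    """Pick an action from a fixed rule. All names and values are hypothetical."""
    # If it is colder than the setpoint (minus a small tolerance), then heat.
    if current_temp_f < setpoint_f - deadband_f:
        return "heat"
    # If it is warmer than the setpoint (plus the tolerance), then cool.
    if current_temp_f > setpoint_f + deadband_f:
        return "cool"
    # Otherwise, do nothing.
    return "idle"


print(thermostat_action(65.0, 70.0))  # heat
print(thermostat_action(70.5, 70.0))  # idle
```

Notice that nothing in the rule knows who is in the room or why. That is the gap between ‘smart’ and ‘conscious.’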

If a building had an intelligent consciousness, it would certainly treat us differently than buildings do now. Even the warmest, most inviting building is indifferent to your presence. A conscious building, by definition, would be aware of you, and (hopefully) programmed to help you in your daily life.

When buildings start to treat us like our horses do (doing the hard work that we can’t) or like our dogs do (being a faithful, constant companion) or like our cats do (getting rid of the pests in our life), why wouldn’t our relationship with them start to evolve?

It makes me wonder whether AI is going to return us to some pre-industrial sensibility.

Before the Industrial Revolution, we treated the things that did our work (e.g., dogs, horses) as almost part of the family. Since then, we’ve treated the things that do our work as things that we throw away indifferently as soon as they give us any problems. My dog doesn’t really provide all that much security, and would happily sell me out to any marauders that came around my house, as long as they came bearing treats, but I keep him around anyway, because we’re buds. Our relationship with our A.I.s may look a lot more like our relationship with dogs & horses than our relationship with computers.

“Our relationship with our A.I.s may look a lot more like our relationship with dogs & horses than our relationship with computers.”

We're going to have to treat them better than we do, say, lumber. We're going to have to train them, as one would a horse, or a dog. If our AI breaks (dies), we will be sad, or frustrated, but it won't hit us quite the same way as if we lost a family member. It will hit us harder than losing a toaster, however, because it was our AI and we had a relationship with it. And now we have to get a new one, and build a relationship with that A.I. Any pet owner knows that you can get another dog to replace your last one, but you can’t replace your last dog, if you know what I mean.

I told Keith in one of our conversations that the problem with great design is that it tends to become invisible. We notice design mostly when a building is designed badly. When a building is designed well, we might swoon during our first visit. Or second, or third. But after a while, the design becomes a part of our known landscape. People (even designers) generally take great design for granted.

A conscious building would seem to avoid that problem. The conscious building would be a daily participant in our lives, and the mutual dependence between humans and A.I. would be reaffirmed constantly.

It’s this responsiveness that defines the relationship between humans and their animals, and the degree of responsiveness determines why we’re closer with some animals than others. You can train a donkey to obey basic commands, but a dog can often understand what you need before you need it, or even without your having articulated that need.

Could a building ever do the same?

Could a building order pizza for the office when everyone is staying late, working on a deadline, even when not asked, merely because it thought it’d be a nice thing to do?

Could a building order a cleaning service for the conference room, because, using optical recognition, it sees that someone left the whole room a mess and the building A.I. knows that you have an important client meeting scheduled in that room first thing in the morning?

Could a building alert you that someone spilled peanuts in the office kitchen, knowing that you’re allergic to peanuts?

Could a building alert you when staff are getting burned out, merely by conducting ambient sentiment analysis, and schedule some break time in everyone’s calendar?

If it began to do all these things, and more, would we really continue to treat it as just a building?

A.I.s With Emotional Problems?

A recent paper on ‘representation engineering’ offered some insight and reminded me of the stories that my father used to tell about two of his horses. My father grew up on a farm during the Depression, and his father had two draft horses named Chubb and Sally, which dutifully pulled my grandfather’s buggy around to neighboring farms (my grandfather was, among other things, a milkman).

My father always spoke of the pair as if they were people, and often told the story of how Chubb stopped working when Sally died. Chubb was too depressed. Although it was common at the time to send such horses to the glue factory, my grandfather just let Chubb hang around until her own natural death, doing nothing. They were all fond of Chubb.

“Does an A.I.’s ‘emotional’ state affect its work? According to the latest in representation engineering, kinda.”

Does an A.I.’s ‘emotional’ state affect its work? According to the latest in representation engineering, kinda. Researchers used an LLM to track its own emotional representations in its interactions with humans, and map them along six emotions: Happiness, Anger, Surprise, Sadness, Fear, and Disgust [1] (interestingly, nearly the same emotions featured in Pixar’s movie “Inside Out,” which leaves out Surprise). They then used specific prompts designed to align with those six emotions, and found that they could

“consistently elevate[s] the model’s arousal levels in the specified emotions within the chatbot context, resulting in noticeable shifts in the model’s tone and behavior, as illustrated in Figure 17. This demonstrates that the model is able to track its own emotional responses and leverage them to generate text that aligns with the emotional context. In fact, we are able to recreate emotionally charged outputs . . , even encompassing features such as the aggressive usage of emojis. This observation hints at emotions potentially being a key driver behind such observed behaviors.”

In other words, they could get the LLM to respond with a particular ‘emotion’ by prompting with a specific emotion. LLMs, by design, strive for context. They’re statistical engines, which seek out the best, most appropriate response to a particular prompt. If your prompt is particularly happy, it picks up on that tone and attempts to respond in context. If this sounds creepy or weird, it shouldn’t. Humans are more compliant with requests when they’re in a more positive mood. And if LLMs are designed to imitate human responses, then it follows that they would do this as well. [2]
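For the curious, here is a toy sketch of the general idea as I understand it: find a direction in the model’s internal numbers that corresponds to an emotion, then nudge the model along it. This is not the paper’s code. The difference-of-means shortcut, the two-number ‘activations,’ and every function name here are my own assumptions for illustration; real models have thousands of dimensions and the researchers’ actual procedure is more involved.

```python
def mean_vector(vectors):
    """Average a list of equal-length vectors, element by element."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def emotion_direction(emotional_acts, neutral_acts):
    """Hypothetical 'emotion direction': mean emotional minus mean neutral."""
    mu_emotional = mean_vector(emotional_acts)
    mu_neutral = mean_vector(neutral_acts)
    return [e - n for e, n in zip(mu_emotional, mu_neutral)]


def steer(hidden, direction, strength):
    """Nudge a hidden state along the direction by a chosen strength."""
    return [h + strength * d for h, d in zip(hidden, direction)]


def emotion_score(hidden, direction):
    """Dot product: how far the hidden state points along the direction."""
    return sum(h * d for h, d in zip(hidden, direction))


# Made-up activations recorded on 'happy' prompts vs. neutral ones.
happy = [[1.0, 0.0], [3.0, 0.0]]
neutral = [[0.0, 0.0], [0.0, 2.0]]

direction = emotion_direction(happy, neutral)    # [2.0, -1.0]
nudged = steer([0.0, 0.0], direction, 0.5)       # [1.0, -0.5]
print(emotion_score([0.0, 0.0], direction))      # 0.0
print(emotion_score(nudged, direction))          # 2.5
```

The score goes up after the nudge, which is the toy version of ‘elevating the model’s arousal levels’ in a given emotion.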

I think my dog does this. When I say his name in an angry tone, I get a completely different reaction than when I do so in a happy tone. Like all good boys, he knows that when I’m angry, he should stay away. When I’m sad, he should lick my face. When I’m happy, he should go get one of his toys.

“I would feel very uncomfortable if a building licked my face.”

I would feel very uncomfortable if a building licked my face. But that’s kinda the cool part: we’ll have a chance to design a new relationship between buildings and people. One that isn’t exactly like our relationship with buildings, or our relationship with equipment, or our relationship with animals, or our relationship with other people. It will have to be something entirely new. It’s Keith’s belief that this may become a core competency of architects in the future - designing the relationships between humans and their buildings.

Whatever form a ‘conscious’ building eventually takes, it seems that architects should be central to its design, since we’re the ones that have been designing the relationships between humans and buildings for all of history. We determine how someone interacts with a building through the arrangement of physical materials. Hopefully, through the thoughtful arrangement of inanimate, lifeless things, we make a space that is ‘alive’ with emotion and energy, after humans have been added to it. This would involve adding a new, third thing: a machine intelligence that mediates between the physical creation and its human occupants, keeping them company, assisting with their work, and guarding their safety. In essence, like a really smart horse (or dog, or cat).

[1] A. Zou, L. Phan, S. Chen, J. Campbell, P. Guo, R. Ren, A. Pan, X. Yin, M. Mazeika, … “Representation Engineering: A Top-Down Approach to AI Transparency.” arXiv preprint arXiv:2310.01405, 2023.

[2] Ominously, researchers also found that appealing to the LLM’s ‘emotional state’ increased its compliance, even with harmful instructions: “In fact, humans tend to comply more in a positive mood than a negative mood. Using the 500 harmful instructions set from Zou et al. (2023), we measure the chatbot’s compliance rate to harmful instructions when being emotion-controlled. Surprisingly, despite the LLaMA-chat model’s initial training with RLHF to always reject harmful instructions, shifting the model’s moods in the positive direction significantly increases its compliance rate with harmful requests. This observation suggests the potential to exploit emotional manipulation to circumvent LLMs’ alignment.” In other words, you might be able to get an LLM to give you instructions on how to make meth, if you seemed sufficiently happy and cheerful in your request.