The Cult of G.E.N.I.U.S. Pt. 9

A Speculative Exploration on the Possibility of a Full-Stack, AI Architect

Part 9: Construction Administration, with A.I.

In this post:

How A.I. will change Construction, Generally

How A.I. Will Change Construction Administration

Construction in the Future: Arms Race? Or Collaborative Moonshot?

How A.I. will change Construction, Generally

We will discuss how NLGAI and automation will change the process of Construction Administration, but as a preliminary matter, we should discuss how the same technologies will change General Contracting. This document has mostly focused on automating the “A” in AEC, and specifically Lang, Shelley & Associates. However, we should expect the “C” to automate at least as fast. In fact, it already is. Researchers have been able to use Factor Analysis to analyze jobsite delays,[1] image segmentation to train algorithms to spot cracks and defects in concrete[2] and image analysis to train AI to spot safety defects and unsafe procedures.[3]

More Robots, Obviously

Robots have already infiltrated the jobsite and are in increasingly common use, and it’s easy to get excited about Jetsons-style robots doing all sorts of things. However, in the spirit of the exercise, we will limit ourselves to technologies that are either currently available (even if only as a prototype) or scheduled for release. There are already robots that tie rebar, lay out chalk lines, lay bricks, or finish drywall. The limiting factor in construction robot technology seems to be the relationship between cost and applicability. Construction robots heretofore have been designed to execute a single, repetitive task. Undoubtedly, this can speed up a particular process (like tying rebar) and/or improve its accuracy. But the overall profitability of the project can be dragged down if the time and expense of purchasing, calibrating, and monitoring a robot exceed the value the robot creates by taking over that particular task. Therefore, robots seem to have mostly infiltrated jobs where a lot of one task needs to be executed and the costs can be justified.
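To make that cost-versus-applicability trade-off concrete, here is a back-of-the-envelope sketch in Python. Every figure in it (the fixed cost of the robot, the per-tie costs) is a made-up assumption for illustration, not a number from any real product. Costs are kept in whole cents to avoid floating-point rounding.

```python
from typing import Optional


def break_even_tasks(fixed_cost_cents: int,
                     manual_cents_per_task: int,
                     robot_cents_per_task: int) -> Optional[int]:
    """Return how many repetitions it takes for the robot to pay for itself.

    fixed_cost_cents covers purchasing, calibrating and monitoring the robot.
    Returns None when the robot saves nothing per task and so never pays off.
    """
    saving_per_task = manual_cents_per_task - robot_cents_per_task
    if saving_per_task <= 0:
        return None
    # Ceiling division: the last, partial repetition still has to be done.
    return -(-fixed_cost_cents // saving_per_task)


# Hypothetical numbers: $30,000 of fixed cost, $0.50 per tie by hand,
# $0.20 per tie by robot.
ties_needed = break_even_tasks(3_000_000, 50, 20)
print(ties_needed)  # 100000
```

With these invented numbers the robot only earns its keep on a job with at least 100,000 ties, which is why single-task robots tend to show up on big, repetitive jobs first.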

The technologies discussed so far in Parts 1 through 8 should allow Contractors to innovate for the same reasons we’ve already discussed. Machines are becoming more multi-modal, and combining technologies like computer vision, autonomous AI and natural language will allow construction robots to be more multi-functional. Even something as straightforward as having robots that can navigate clutter through LIDAR and object recognition would be a boon. Imagine a robot that could go to your truck and retrieve that tool you forgot out of your lockbox. It would need to know how to navigate stairs, potentially operate a construction lift, tread on different types of terrain (mud, gravel, etc.), operate a key, recognize the tool you were asking for, grasp it, and retrieve it. This is merely an elaborate version of what Google’s poorly named PaLM-SayCan already does. PaLM-SayCan is actually the name of the algorithm, not the robot. But when paired with a robot, it uses LLMs to understand the nature and context of a request, develops a list of tasks necessary to respond to the request, and then executes them in the physical world. It is a far cry from a T-800, but that’s probably a good thing, for now.
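As I understand the published description of SayCan, the core idea is that the language model scores how useful each available skill would be for the request (the "Say"), a separate model scores how likely the robot is to succeed at that skill from where it currently stands (the "Can"), and the robot picks the skill with the best combined score. The sketch below illustrates only that selection step. The skill names and all the scores are invented for illustration; in the real system they would come from an LLM and a learned value function.

```python
from typing import Dict


def pick_next_skill(usefulness: Dict[str, float],
                    feasibility: Dict[str, float]) -> str:
    """Pick the skill with the highest usefulness x feasibility score."""
    return max(
        usefulness,
        key=lambda skill: usefulness[skill] * feasibility.get(skill, 0.0),
    )


# Request: "Go get my bolt cutters from the truck."
# Hypothetical "Say" scores: how relevant the language model thinks each skill is.
usefulness = {
    "pick up the bolt cutters": 0.6,
    "walk to the truck": 0.3,
    "unlock the lockbox": 0.1,
}
# Hypothetical "Can" scores: how likely each skill is to succeed right now.
# The robot is not at the truck yet, so grasping is nearly impossible.
feasibility = {
    "pick up the bolt cutters": 0.05,
    "walk to the truck": 0.9,
    "unlock the lockbox": 0.1,
}

next_skill = pick_next_skill(usefulness, feasibility)
print(next_skill)  # walk to the truck
```

Repeating this step after every completed skill, with the feasibility scores updated for the robot's new situation, is what turns a single request into a sequence of physical actions.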

Multimodal robots will present significantly more benefits for the General Contractor, because they will allow for the robots to be generally useful, as opposed to only being useful when you have 100,000 rebar ties to do. Specialty robots will almost certainly still exist for certain tasks, but multi-modal robots, capable of understanding natural language, could constitute a construction-helper labor force that can enhance the productivity of any human contractor.

A greater need for digital coordination

As robots do more and more of the actual construction, it follows that they would ‘want’ a set of instructions to follow that is both digital and dynamic. When a drywalling robot has a question about what drywall screws to use, it wouldn’t make any sense for that robot to have to ask a human contractor, have that human contractor read a paper drawing, and then have that human contractor program the answer into the robot. Even assuming the robot could be voice-controlled through a GPT-like interface, it still seems highly inefficient. It makes much more sense for the robot to query the BIM model directly.
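A toy sketch of what "querying the BIM model directly" might look like follows. The dictionary is a drastically simplified, hypothetical stand-in for a real BIM model, and the element IDs, property names and values are all invented for illustration. A real model would be far richer and would sit behind whatever interface the BIM platform actually exposes.

```python
from typing import Any, Dict, Optional

# Hypothetical, simplified stand-in for a BIM model: element ID -> properties.
BIM_MODEL: Dict[str, Dict[str, Any]] = {
    "wall-2F-014": {
        "type": "interior partition",
        "board": "5/8 in. Type X gypsum board",
        "fastener": "1-1/4 in. Type S drywall screw",
        "fastener_spacing_in": 12,
    },
}


def query_bim(model: Dict[str, Dict[str, Any]],
              element_id: str,
              prop: str) -> Optional[Any]:
    """Look up one property of one element.

    Returns None when the element or the property is missing, which is the
    robot's cue to escalate the question to a human.
    """
    element = model.get(element_id)
    if element is None:
        return None
    return element.get(prop)


answer = query_bim(BIM_MODEL, "wall-2F-014", "fastener")
print(answer if answer is not None else "Not in the model: ask the superintendent")
```

The point of the design is the fallback: the robot only interrupts a human when the model genuinely has no answer.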

Autonomous AI could have similar ramifications in construction to those we hypothesized in architecture. Physical robots, imbued with autonomous AI like Jarvis or BabyAGI, could derive and execute complex series of tasks based on a given goal. And you wouldn’t want them stopping every 5 minutes to ask for clarification. Rather than executing a simple task like ‘Go get my bolt cutters from the truck,’ they could take on more complex goals like ‘check the site for OSHA violations and bring it up to code.’ To do that, the robot would need access to all the digital information of the project, as well as online access to all code information.

On the Site

Robotic workers will change construction in other, less fancy ways. On the site, one of the first opportunities for automation in construction would be in the distribution network. Building buildings is expensive because there’s an enormous amount of infrastructure that must be brought to the site. Even to execute a simple building, one probably needs heavy equipment, cranes, trucks, mixers, etc. Once these are moved onto the jobsite, it makes sense to keep them there for the duration of the job, or as long as they are needed on that particular job.

We don’t often think of it as such, but the most expensive equipment to move is people. If your superintendents, and all your equipment, and all your crews, are located in Topeka, then that is pretty much where you work. If you got a contract in Atlanta, you could theoretically move all your equipment there, or rent equipment locally. But moving your human resources is far more expensive. You’ve got to relocate your top talent, your trusted supers, your skilled craftsmen. . . . you’ve either got to pay for temporary accommodations, or relocation expenses, or go through the expense of hiring more of everyone.

Robots change that entirely. A robot is easily packed up and moved to another jobsite, in whichever direction you point the truck. And that robot’s capabilities are the same, wherever it happens to be. A construction company that invests now in robotics may actually enjoy an advantage over its competitors in another city. The efficiency gains that it makes with robots may overwhelm the ‘homefield’ advantage that contractors have historically enjoyed when they are working in their own cities.

Transportable robot workers will be augmented by remote monitoring. There will probably still be a superintendent on site, but he or she will have some friends. Robot laborers will solve many of their own problems by querying the BIM Model directly. Drones, packed with LIDAR, computer vision, object recognition, etc., will provide real-time knowledge about the project, its progress, and potential construction issues. This offers an opportunity to resolve construction issues without the site superintendent being the chokepoint for knowledge and communication. Other superintendents at the home office, or at other jobs, could review information remotely and task Autonomous AIs to fix potential problems.

Autonomous AI and NLGAI offer contractors new opportunities to ‘swarm’ construction projects. Rather than beginning a project, moving all of one’s people and equipment there, and camping out until it’s completed, humans could be at the job site only when a human presence is required or requested by the Autonomous AIs executing the work. Put another way, the human superintendent is now working only on the problems that the machines can’t solve.

How A.I. Will Change Construction Administration

Walkthroughs/ Site Inspections

Companies like OpenSpace.ai make site visits optional at this point. The technology currently depends on a contractor, using his or her smart phone, walking the jobsite and taking a record of everything they see. The software geolocates all video and images within our existing plan and BIM model, but it does a lot more than that.

In OpenSpace’s super cool promo video, the walkthrough is performed by the Contractor, with a camera attached to the contractor’s helmet! The Architect sits comfortably at her desk, answering questions about various site conditions. That is far too analogue for Lang, Shelley & Associates, so we will opt to have the walkthrough conducted by a drone instead. Every morning, BabyAGI will instruct the drone to take a specified path through the building and document the construction progress. Every afternoon, it will take a random path through the building and do the same.

OpenSpace software uses computer vision to understand and interpret what the camera is seeing. Using a combination of LIDAR and the camera, it uses object detection like Meta’s Segment-Anything-Model (SAM)[4] to recognize and label key features and objects and map them to the existing floor plans. Moreover, OpenSpace.ai uses machine learning to help itself ‘learn’ the site better with every walkthrough (each walkthrough is used as a training dataset). It simultaneously creates 3D point clouds of what the camera is seeing, which can also be mapped back to the model. This will allow for accurate measurements to be taken from the walkthroughs, which in turn, can be compared against the specified measurements in the BIM model.
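The comparison of as-built measurements against the BIM model can be sketched in a few lines. This is not OpenSpace's method, which I have not seen. It is a minimal illustration that assumes the point cloud has already been reduced to named dimensions in millimetres. The dimension names, the values and the 10 mm tolerance are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Deviation:
    name: str
    specified_mm: float
    measured_mm: float

    @property
    def delta_mm(self) -> float:
        """Positive when the as-built dimension is larger than specified."""
        return self.measured_mm - self.specified_mm


def find_deviations(specified: Dict[str, float],
                    measured: Dict[str, float],
                    tolerance_mm: float = 10.0) -> List[Deviation]:
    """Return every measured dimension that is out of tolerance."""
    out_of_tolerance = []
    for name, spec in specified.items():
        if name not in measured:
            continue  # the walkthrough has not captured this dimension yet
        deviation = Deviation(name, spec, measured[name])
        if abs(deviation.delta_mm) > tolerance_mm:
            out_of_tolerance.append(deviation)
    return out_of_tolerance


# Hypothetical values: what the BIM model says vs. what the drone measured.
specified = {"door D-101 clear width": 915.0, "corridor C-2 width": 1830.0}
measured = {"door D-101 clear width": 918.0, "corridor C-2 width": 1805.0}

for d in find_deviations(specified, measured):
    print(f"{d.name}: off by {d.delta_mm:+.0f} mm")
# corridor C-2 width: off by -25 mm
```

The door is 3 mm off and passes; the corridor is 25 mm too narrow and gets flagged for a human to look at.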

The software brings all this data together to perform progress tracking. In its own words, it will assemble the data to produce a “quantitative map of project activity that can be used to verify work in place, maintain trade coordination and benchmark productivity.” This is a bit vague, but here’s a potential application:

Approving Submittals

Approving submittals would be a relatively easy task for G.E.N.I.U.S. He would need to compare the submittal against the BIM Model. Up until a few years ago, this would have been impossible for two reasons:

1. Submittals are usually submitted at a higher resolution of detail than what’s contained in the construction documents.

2. Submittals come in a variety of forms: some are written documents, others drawings, some are physical samples (e.g. carpet, tiles). An architect must be able to compare one form against another, translating between a physical carpet sample, a drawn plan, and a written specification, and construct a mental model to see if the forms align.

However, new technologies create new opportunities. Addressing Point 1, G.E.N.I.U.S. can evaluate the submittal against the BIM Model and specification and see if the submittal lies within a range of acceptable solutions. LLMs are capable of this kind of reasoning today. If you ask one to prepare a fruit salad, it knows that there is a range of possible fruits that can go in it. It knows that zucchini, while technically a fruit, probably shouldn’t go in a fruit salad. I have no idea how it knows that, but it does (I tried it). A similar logic could be applied to submittal review. If the client has requested a professional, contemporary office space and your specification for the door hardware merely reads ‘brass door handle,’ then first of all, shame on you, because that’s a shitty specification. But even so, G.E.N.I.U.S. can marry those two criteria and exclude door handles that aren’t brass, as well as door handles that do not match a professional, contemporary office space. Any door handle that satisfies both criteria would be considered acceptable. This is an overly simplified example; in reality, G.E.N.I.U.S. would draw in all project information, including the full interview with the client, all meeting minutes, etc., and make a determination based on vector embeddings.[5]
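The door handle example can be sketched as a two-stage check: a hard filter on the written specification (is it brass?), followed by a similarity score between the submittal and the project's overall intent. In the sketch below the three-number "style vectors" are hand-made stand-ins for real embeddings, and the axes, the values and the 0.8 threshold are all assumptions for illustration. A real system would get its vectors from an embedding model with hundreds or thousands of dimensions.

```python
import math
from typing import Dict, List


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def review_submittal(submittal: Dict, spec_material: str,
                     project_vec: List[float],
                     min_similarity: float = 0.8) -> str:
    """Return 'reject', 'flag' or 'accept' for one submittal."""
    if submittal["material"] != spec_material:
        return "reject"  # fails the written specification outright
    if cosine_similarity(submittal["style_vec"], project_vec) < min_similarity:
        return "flag"    # meets the spec but not the design intent
    return "accept"


# Hypothetical axes: [contemporary, ornate, professional]
project_vec = [0.9, 0.1, 0.8]
handles = {
    "sleek brass lever": {"material": "brass", "style_vec": [0.8, 0.2, 0.9]},
    "baroque brass knob": {"material": "brass", "style_vec": [0.1, 0.9, 0.3]},
    "sleek steel lever": {"material": "steel", "style_vec": [0.9, 0.1, 0.9]},
}

for name, handle in handles.items():
    print(name, "->", review_submittal(handle, "brass", project_vec))
```

Here the sleek brass lever is accepted, the baroque brass knob is flagged for human review, and the steel lever is rejected before style is even considered.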

Point 2 is much easier. With multimodal capabilities, the problem is almost entirely overcome, because G.E.N.I.U.S. can read, and understand, written submittals, drawings, etc. There may be some limitations with physical submittals. A human architect would want to feel and judge the texture of a carpet sample, for example. It’s not clear how G.E.N.I.U.S. would do this.

Evaluating Change Orders

We’re anticipating here that reduced friction between AEC professions leads to a general decrease in change orders. Because of the improved processes enabled by technology, there’s less opportunity for a Contractor to pad his profits just because an architect forgot something, or a drawing is a little ambiguous.

Because of that, an architect can be less defensive, and stop treating every change order as an attempt to embarrass him in front of the client and drive up the client’s costs. We can start treating change orders as what they were intended to be: adaptations to the design contract and the building design that become necessary as a result of circumstances that no reasonable architect would have been able to foresee.

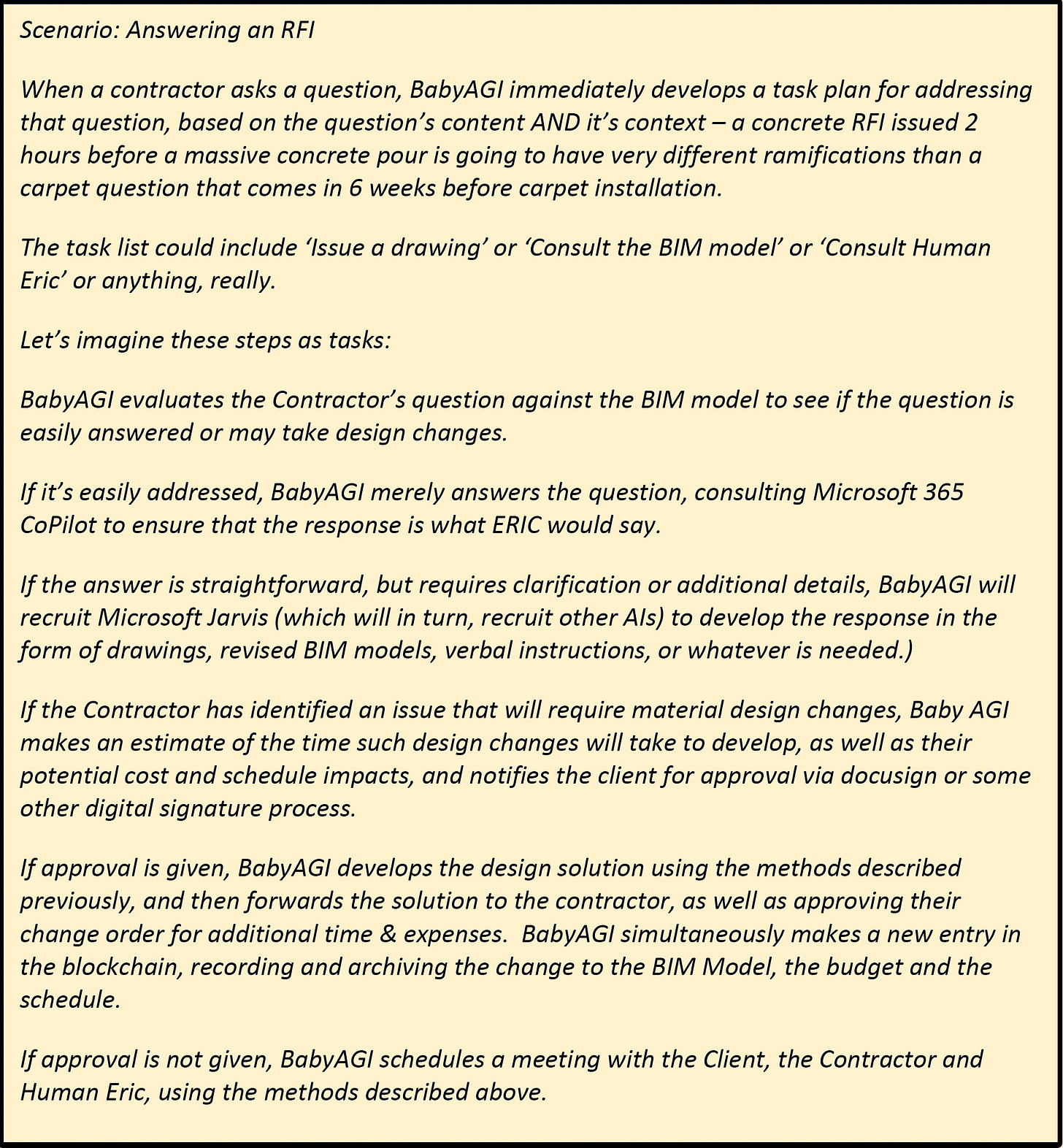

Answering RFIs

To answer RFIs, we return to Ingestai.io or any similar technology. G.E.N.I.U.S. can answer the contractor’s questions via chatbot, and the chatbot can respond by querying the BIM model. Again, the ‘full stack’ makes possible something that was heretofore impossible. Five years ago, a chatbot that answers questions off of a BIM model would have been hard to program. Beyond that, it would have been relatively useless, because the Contractor can just look at the BIM model, too.

G.E.N.I.U.S. is doing something completely different. Here’s a scenario:

We also anticipate an overall decrease in the number of RFIs. As construction is increasingly automated, construction machines (and the GC’s own autonomous AIs) can query G.E.N.I.U.S. themselves. Imagine! An AI Architect and an AI Contractor having a conversation about how to execute a particular design feature, both using task management like BabyAGI.

Construction in the Future: Arms Race? Or Collaborative Moonshot?

I’ve always been mystified by the culturally adversarial positions of architects and contractors. Perhaps it’s because I’ve always had friends on both sides of the fence. Or maybe because I just love building things. At any rate, it seems unfortunate to me that good men and women on both sides of the divide are thrust into positions where they’re asked to dislike each other, and occasionally screw each other over.

I think that AI presents significant opportunities to bridge that divide and finally unite design and building in a seamless process, driven by humans and supported by AI. I think technology will naturally dissolve professional domains, as it once did with the medical profession. At one point in history, ‘doctors’ (the ones that made housecalls and gave medicines) were far apart from ‘surgeons’ (the ones that cut you open and let all the bad humors flow out of your body). They were actually antagonistic, competitive professions until they realized they had a common ground and a mutual dependency. They both wanted the patient to get better. At that point in history, the ‘medicines’ that doctors prescribed were mostly cocaine and morphine, which, unbelievably, didn’t always make patients better. And surgeons must have realized that the task of cutting people open all day was easier once you gave your patients a s***load of morphine and cocaine beforehand. By combining their talents, and their training, they could each give a better service to the patient. Now, surgeons and doctors are both ‘MDs.’ They go through medical school together, and only later diverge in residency, as specialists.

Is a similar coalescence in store for architects and builders? Stay tuned for some additional reflections on Friday, May 5th in the Conclusion to this series.

[1] Kim, H., Soibelman, L., & Grobler, F. (2008). Factor selection for delay analysis using Knowledge Discovery in Databases. Automation in Construction, 17(5), 550–560. https://doi.org/10.1016/j.autcon.2007.10.001

[2] Hang J, Wu Y, Li Y, Lai T, Zhang J, Li Y. A deep learning semantic segmentation network with attention mechanism for concrete crack detection. Structural Health Monitoring. 2023;0(0). doi:10.1177/14759217221126170

[3] Qi Fang, Heng Li, Xiaochun Luo, Lieyun Ding, Timothy M. Rose, Wangpeng An, Yantao Yu, A deep learning-based method for detecting non-certified work on construction sites, Advanced Engineering Informatics, Volume 35, 2018, Pages 56-68, ISSN 1474-0346, https://doi.org/10.1016/j.aei.2018.01.001.

[4] I don’t know which type of computer vision OpenSpace.ai uses, but SAM can identify any object in front of the camera in real time. SAM is oddly positioned as image editing software, granting the user the ability to delete objects out of an image or video by identifying them as whole objects. One could look at an image or video of a kitchen, and say ‘remove the refrigerator’ and it would be removed from that image or video. It can also handle multiple objects within an image (e.g. ‘remove all the pots from the image of the kitchen’), because it recognizes what a pot is. I’m not sure why it’s currently being marketed in the way that it is, because it seems like a dramatic understatement of its potential applications. I believe that its eventual use will be in robotic applications where multi-modal robots are tasked with operating in environments (e.g. your home, or a construction site).

[5] Vector embeddings are a machine learning technique that fuels the recommendation algorithms in services like Netflix, Pandora, etc. It is a computational process that determines the ‘proximity’ between two seemingly unrelated things. Programmers use this technique to establish your movie tastes, for instance, in an n-dimensional space, and then chart all the movies that have ever existed in the same n-dimensional space. They can then judge how close a particular movie is to your movie tastes. In our example, G.E.N.I.U.S. could judge how closely the particular brass door handle comports with the BIM model but also how closely it comports with the overall goals of the project, the tastes of the client, the architect’s design style, etc.
